From Explainable AI to Causability

Welcome, students, to the course LV 706.315, class of summer term 2019!

Syllabus-Explainable-AI-706315-2019 3 ECTS, Postgraduate Course

This page is valid as of February 23, 2019, 17:00 CET

INTRODUCTION

6-minute YouTube video on explainable AI

COURSE at a glance

Whilst explainable AI is the engineering that enables human interpretability, causability is (similar to usability) the measurement of causality and explainability;

see: https://onlinelibrary.wiley.com/doi/full/10.1002/widm.1312

MOTIVATION for this course

This course is an extension of last year's “Methods of explainable AI”, which was a natural offspring of the interactive Machine Learning (iML) courses and the decision making courses held over the last years. Today the most successful AI/machine learning models, e.g. deep learning (see the difference AI-ML-DL), are often considered to be “black boxes”, making it difficult to re-enact a machine decision and to answer the question of why it has been reached. A general serious drawback is that statistical learning models have no explicit declarative knowledge representation. That means such models have enormous difficulty in generating underlying explanatory structures, which limits their ability to understand the context.

Here the human-in-the-loop is exceptionally good, and as our goal is to augment human intelligence with artificial intelligence, we should rather speak of having a computer-in-the-loop. This is not necessary in some application domains (e.g. autonomous vehicles), but it is essential in our application domain, which is the medical domain (see our digital pathology project). A medical doctor must be able to retrace a result on demand in a human-understandable way. This calls not only for explainable models, but also for explanation interfaces (see AK HCI course).

Interestingly, early AI systems (rule-based systems) were explainable to a certain extent within a well-defined problem space. Therefore this course will also provide a background on decision support systems from the early 1970s (e.g. MYCIN, or GAMUTS of Radiology). In the class of 2019 we will focus even more on making inferences from observational data and reasoning under uncertainty, which quickly brings us into the field of causality, because in the biomedical domain (see e.g. our digital pathology project) we need to discover and understand unexpected, interesting and relevant patterns in data to gain knowledge or for troubleshooting.

GOAL of this course

This graduate course follows a research-based teaching (RBT) approach and provides an overview of selected current state-of-the-art methods for making AI transparent, re-traceable, re-enactable, understandable, and consequently explainable. Note: We speak Python.

TARGET group of this course:

Research students of Computer Science, particularly of the Holzinger group.

BACKGROUND

Explainability is motivated by the lack of transparency of so-called black-box approaches, which do not foster trust [6] in, and acceptance of, AI generally and ML specifically. Rising legal and privacy concerns, e.g. with the new European General Data Protection Regulation (GDPR, in effect since May 2018), will make black-box approaches difficult to use in business, because they are often unable to explain why a machine decision has been made (see explainable AI).

Consequently, the field of Explainable AI has recently been gaining international awareness and interest (see the news blog), because rising legal, ethical, and social concerns make it mandatory to enable a human, on request, to understand and to explain why a machine decision has been made [see Wikipedia on Explainable Artificial Intelligence]. Note: this does not mean that it is always necessary to explain everything, but that it must be possible to explain a decision if necessary, e.g. for general understanding, for teaching, for learning, for research, in court, or even on demand by a citizen (right of explainability).

HINT

If you need a statistics/probability refresher go to the Mini-Course MAKE-Decisions and review the statistics/probability primer: https://human-centered.ai/mini-course-make-decision-support/

Module 00 – Primer on Probability and Information Science (optional)

Keywords: probability, data, information, entropy measures

Topic 00: Mathematical Notations

Topic 01: Probability Distribution and Probability Density

Topic 02: Expectation and Expected Utility Theory

Topic 03: Joint Probability and Conditional Probability

Topic 04: Independent and Identically Distributed Data IIDD *)

Topic 05: Bayes and Laplace

Topic 06: Measuring Information: Kullback-Leibler Divergence and Entropy
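As a small illustration of Topic 06, the following sketch computes Shannon entropy and Kullback-Leibler divergence for discrete distributions. The function names and the two example distributions are illustrative assumptions and are not taken from the course slides.

```python
import math


def entropy(p):
    """Shannon entropy H(p) in bits of a discrete distribution p."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def kl_divergence(p, q):
    """Kullback-Leibler divergence D_KL(p || q) in bits.

    Assumes q[i] > 0 wherever p[i] > 0; otherwise the divergence is infinite.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical example distributions over two outcomes
fair = [0.5, 0.5]
biased = [0.9, 0.1]

print("H(fair)   =", entropy(fair))
print("H(biased) =", entropy(biased))
print("D_KL(biased || fair) =", kl_divergence(biased, fair))
print("D_KL(fair || biased) =", kl_divergence(fair, biased))
```

The two divergence values differ, which shows that the Kullback-Leibler divergence is not symmetric and therefore is not a distance metric.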

Lecture slides 2×2 (10,300 kB): contact lecturer for slide deck

Recommended reading for students:

[1] David J.C. MacKay 2003. Information theory, inference and learning algorithms, Cambridge, Cambridge University Press. Online available: https://www.inference.org.uk/itprnn/book.html

Slides online available: https://www.inference.org.uk/itprnn/Slides.shtml

*) this is the kind of data we never have in the medical domain

Module 01 – Introduction

Keywords: HCI-KDD approach, integrative AI/ML, complexity, automatic ML, interactive ML, explainable AI

Topic 00: Reflection – follow up from Module 0 – dealing with probability and information

Topic 01: The HCI-KDD approach: Towards an integrative AI/ML ecosystem [1]

Topic 02: The complexity of the application area health informatics [2]

Topic 03: Probabilistic information

Topic 04: Automatic ML (aML)

Topic 05: Interactive ML (iML)

Topic 06: From interactive ML to explainable AI (ex-AI)

Lecture slides 2×2 (26,755 kB): contact lecturer for slide deck

[1] Andreas Holzinger (2013). Human–Computer Interaction and Knowledge Discovery (HCI-KDD): What is the benefit of bringing those two fields to work together? In: Cuzzocrea, Alfredo, Kittl, Christian, Simos, Dimitris E., Weippl, Edgar & Xu, Lida (eds.) Multidisciplinary Research and Practice for Information Systems, Springer Lecture Notes in Computer Science LNCS 8127. Heidelberg, Berlin, New York: Springer, pp. 319-328, doi:10.1007/978-3-642-40511-2_22.

Online available in the IFIP Hyper Articles en Ligne (HAL), INRIA: https://hal.archives-ouvertes.fr/hal-01506781/document

[2] Andreas Holzinger, Matthias Dehmer & Igor Jurisica 2014. Knowledge Discovery and interactive Data Mining in Bioinformatics – State-of-the-Art, future challenges and research directions. Springer/Nature BMC Bioinformatics, 15, (S6), I1, doi:10.1186/1471-2105-15-S6-I1. https://bmcbioinformatics.biomedcentral.com/articles/10.1186/1471-2105-15-S6-I1

Module 02 – Decision Making and Decision Support

Keywords: information, decision, decision making, medical action

Topic 00: Reflection – follow up from Module 1 – introduction

Topic 01: Medical action = Decision making

Topic 02: The underlying principles of intelligence and cognition

Topic 03: Human vs. Computer

Topic 04: Human Information Processing

Topic 05: Probabilistic decision theory

Topic 06: The problem of understanding context

Lecture slides 2×2 (31,120 kB): contact lecturer for slide set

Module 03 – From Expert Systems to Explainable AI

Topic 00: Reflection – follow up from Module 02

Topic 01: Decision Support Systems (DSS)

Topic 02: Do computers help in making better decisions?

Topic 03: History of DSS = History of AI

Topic 04: Example: Towards Precision Medicine

Topic 05: Example: Case based Reasoning (CBR)

Topic 06: A few principles of causality

Lecture slides 2×2 (27,177 kB): contact lecturer for slide set

Module 04 – Coarse Overview of Explanation Methods and Transparency Algorithms

Keywords: Global explainability, local explainability, ante-hoc, post-hoc

Topic 00: Reflection – follow up from Module 3

Topic 01: Global vs. local explainability

Topic 02: Ante-hoc vs. Post-hoc interpretability

Topic 03: Examples for Ante-hoc: GAM, S-AOG, Hybrid models, iML

Topic 04: Examples for Post-hoc: LIME, BETA, LRP

Topic 05: Making neural networks transparent

Topic 06: Explanation Interfaces

Lecture slides 2×2 (33,887 kB): contact lecturer for slide set

Module 05 – Selected Methods of explainable-AI Part I

Keywords: LIME, BETA, LRP, Deep Taylor Decomposition, Prediction Difference Analysis

Topic 00: Reflection – follow up from Module 4

Topic 01: LIME (Local Interpretable Model Agnostic Explanations) – Ribeiro et al. (2016) [1]

Topic 02: BETA (Black Box Explanation through Transparent Approximation) – Lakkaraju et al. (2017) [2]

Topic 03: LRP (Layer-wise Relevance Propagation) – Bach et al. (2015) [3]

Topic 04: Deep Taylor Decomposition – Montavon et al. (2017) [4]

Topic 05: Prediction Difference Analysis – Zintgraf et al. (2017) [5]

Lecture slides 2×2 (15,521 kB): contact lecturer for slide deck

Reading for Students:

[1] Marco Tulio Ribeiro, Sameer Singh & Carlos Guestrin 2016. Model-Agnostic Interpretability of Machine Learning. arXiv:1606.05386.

[2] Himabindu Lakkaraju, Ece Kamar, Rich Caruana & Jure Leskovec 2017. Interpretable and Explorable Approximations of Black Box Models. arXiv:1707.01154.

[3] Sebastian Bach, Alexander Binder, Grégoire Montavon, Frederick Klauschen, Klaus-Robert Müller & Wojciech Samek 2015. On pixel-wise explanations for non-linear classifier decisions by layer-wise relevance propagation. PloS one, 10, (7), e0130140, doi:10.1371/journal.pone.0130140. NOTE: Sebastian BACH is now Sebastian LAPUSCHKIN

[4] Grégoire Montavon, Wojciech Samek & Klaus-Robert Müller 2017. Methods for interpreting and understanding deep neural networks. arXiv:1706.07979.

[5] Luisa M. Zintgraf, Taco S. Cohen, Tameem Adel & Max Welling 2017. Visualizing deep neural network decisions: Prediction difference analysis. arXiv:1702.04595.

Module 06 – Selected Methods of explainable-AI Part II

Topic 00: Reflection – follow up from Module 5

Topic 01: Visualizing Convolutional Neural Nets with Deconvolution – Zeiler & Fergus (2014) [1]

Topic 02: Inverting Convolutional Neural Networks – Mahendran & Vedaldi (2015) [2]

Topic 03: Guided Backpropagation – Springenberg et al. (2015) [3]

Topic 04: Deep Generator Networks – Nguyen et al. (2016) [4]

Topic 05: Testing with Concept Activation Vectors (TCAV) – Kim et al. (2018) [5]

Lecture slides 2×2 (11,944 kB): contact lecturer for slide deck

Reading for Students:

[1] Matthew D. Zeiler & Rob Fergus 2014. Visualizing and understanding convolutional networks. In: Fleet, D., Pajdla, T., Schiele, B. & Tuytelaars, T. (eds.) ECCV, Lecture Notes in Computer Science LNCS 8689. Cham: Springer, pp. 818-833, doi:10.1007/978-3-319-10590-1_53.

[2] Aravindh Mahendran & Andrea Vedaldi. Understanding deep image representations by inverting them. Proceedings of the IEEE conference on computer vision and pattern recognition, 2015. 5188-5196, doi:10.1109/CVPR.2015.7299155.

[3] Jost Tobias Springenberg, Alexey Dosovitskiy, Thomas Brox & Martin Riedmiller 2014. Striving for simplicity: The all convolutional net. arXiv:1412.6806.

[4] Anh Nguyen, Alexey Dosovitskiy, Jason Yosinski, Thomas Brox & Jeff Clune. Synthesizing the preferred inputs for neurons in neural networks via deep generator networks. Advances in Neural Information Processing Systems (NIPS 2016), 2016 Barcelona. 3387-3395. Read the reviews: https://media.nips.cc/nipsbooks/nipspapers/paper_files/nips29/reviews/1685.html

[5] Been Kim, Martin Wattenberg, Justin Gilmer, Carrie Cai, James Wexler & Fernanda Viegas. Interpretability beyond feature attribution: Quantitative testing with concept activation vectors (tcav). International Conference on Machine Learning, ICML 2018. 2673-2682, Stockholm.

Module 07 – Selected Methods of explainable-AI Part III

Topic 00: Reflection – follow up from Module 06

Topic 01: Understanding the Model: Feature Visualization – Erhan et al. (2009) [1]

Topic 02: Understanding the Model: Deep Visualization – Yosinski et al. (2015) [2]

Topic 03: Recurrent Neural Networks cell state analysis – Karpathy et al. (2015) [3]

Topic 04: Fitted Additive – Caruana et al. (2015) [4]

Topic 05: Interactive Machine Learning with the human-in-the-loop – Holzinger et al. (2017) [5]

Lecture slides 2×2 (16,111 kB): contact lecturer for slide set

Reading for Students:

[1] Dumitru Erhan, Yoshua Bengio, Aaron Courville & Pascal Vincent 2009. Visualizing higher-layer features of a deep network. Technical Report 1341, Departement d’Informatique et Recherche Operationnelle, University of Montreal. [pdf available here]

[2] Jason Yosinski, Jeff Clune, Anh Nguyen, Thomas Fuchs & Hod Lipson 2015. Understanding neural networks through deep visualization. arXiv:1506.06579. Please watch this video: https://www.youtube.com/watch?v=AgkfIQ4IGaM

You can find the code here: https://yosinski.com/deepvis (cool stuff!)

[3] Andrej Karpathy, Justin Johnson & Li Fei-Fei 2015. Visualizing and understanding recurrent networks. arXiv:1506.02078. Code available here: https://github.com/karpathy/char-rnn (awesome!)

[4] Rich Caruana, Yin Lou, Johannes Gehrke, Paul Koch, Marc Sturm & Noemie Elhadad. Intelligible models for healthcare: Predicting pneumonia risk and hospital 30-day readmission. 21st ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD ’15), 2015 Sydney. ACM, 1721-1730, doi:10.1145/2783258.2788613.

[5] Andreas Holzinger, et al. 2018. Interactive machine learning: experimental evidence for the human in the algorithmic loop. Applied Intelligence, doi:10.1007/s10489-018-1361-5.

Module 08 – Selected Methods of explainable-AI Part IV

Topic 00: Reflection – follow up from Module 07

Topic 01: Sensitivity Analysis I – Simonyan et al. (2013) [1]

Topic 02: Sensitivity Analysis II – Baehrens et al. (2009) [2]

Topic 03: Gradients I: General overview and usefulness for explaining

Topic 04: Gradients II: DeepLIFT – Shrikumar et al. (2017) [4]

Topic 05: Gradients III: Grad-CAM – Selvaraju et al. (2016) [5]

Topic 06: Gradients IV: Integrated Gradients – Sundararajan et al. (2017) [6]

Please also read the interesting paper on “Gradient vs. Decomposition” by Montavon et al. (2018) [7]

Lecture slides 2×2 (11,789 kB): contact lecturer for slide set

Reading for Students:

[1] Karen Simonyan, Andrea Vedaldi & Andrew Zisserman 2013. Deep inside convolutional networks: Visualising image classification models and saliency maps. arXiv:1312.6034.

[2] David Baehrens, Timon Schroeter, Stefan Harmeling, Motoaki Kawanabe, Katja Hansen & Klaus-Robert Müller 2010. How to explain individual classification decisions. Journal of Machine Learning Research, 11, (Jun), 1803-1831.

https://www.jmlr.org/papers/v11/baehrens10a.html

[3]

[4] Avanti Shrikumar, Peyton Greenside & Anshul Kundaje 2017. Learning important features through propagating activation differences. arXiv:1704.02685.

https://github.com/kundajelab/deeplift

Youtube Intro: https://www.youtube.com/watch?v=v8cxYjNZAXc&list=PLJLjQOkqSRTP3cLB2cOOi_bQFw6KPGKML

[5] Ramprasaath R. Selvaraju, Michael Cogswell, Abhishek Das, Ramakrishna Vedantam, Devi Parikh & Dhruv Batra. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. ICCV, 2017. 618-626.

Module CA – Causality Learning and Causality for Decision Support

Keywords: Causality, Graphical Causal Models, Bayesian Networks, Directed Acyclic Graphs

Topic 01: Making inferences from observed and unobserved variables and reasoning under uncertainty [1]

Topic 02: Factuals, Counterfactuals [2], Counterfactual Machine Learning and Causal Models [3]

Topic 03: Probabilistic Causality Examples

Topic 04: Causality in time series (Granger Causality)

Topic 05: Psychology of causation

Topic 06: Causal Inference in Machine Learning

Lecture slides 2×2 (15,544 kB): contact lecturer for slide deck

Reading for students:

[1] Judea Pearl 1988. Evidential reasoning under uncertainty. In: Shrobe, Howard E. (ed.) Exploring artificial intelligence. San Mateo (CA): Morgan Kaufmann, pp. 381-418.

[2] Matt J. Kusner, Joshua Loftus, Chris Russell & Ricardo Silva. Counterfactual fairness. In: Guyon, Isabelle, Luxburg, Ulrike Von, Bengio, Samy, Wallach, Hanna, Fergus, Rob & Vishwanathan, S.V.N., eds. Advances in Neural Information Processing Systems 30 (NIPS 2017), 2017. 4066-4076.

[3] Judea Pearl 2009. Causality: Models, Reasoning, and Inference (2nd Edition), Cambridge, Cambridge University Press.

[4] Judea Pearl 2018. Theoretical Impediments to Machine Learning With Seven Sparks from the Causal Revolution. arXiv:1801.04016.

Module TE – Testing and Evaluation of Machine Learning Algorithms

Keywords: performance, metrics, error, accuracy

Topic 01: Test data and training data quality

Topic 02: Performance measures (confusion matrix, ROC, AUC)

Topic 03: Hypothesis testing and estimating

Topic 04: Comparison of machine learning algorithms

Topic 05: “There-is-no-free-lunch” theorem

Topic 06: Measuring beyond accuracy (simplicity, scalability, interpretability, learnability, …)
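The performance measures of Topic 02 can be illustrated with a minimal sketch that derives common metrics from a binary confusion matrix. The labels below are made-up toy data, and the helper names are illustrative assumptions; ROC and AUC are not covered in this sketch.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels, where 1 is the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def metrics(tp, fp, fn, tn):
    """Basic metrics; assumes every denominator is non-zero."""
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "sensitivity": tp / (tp + fn),   # true positive rate (recall)
        "specificity": tn / (tn + fp),   # true negative rate
        "precision": tp / (tp + fp),     # positive predictive value
    }


# Hypothetical toy data: 4 positive and 6 negative cases
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

counts = confusion_counts(y_true, y_pred)
result = metrics(*counts)
print(counts)
print(result)
```

Reporting sensitivity and specificity next to accuracy matters in the medical domain, where class frequencies are often very unequal.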

Lecture slides 2×2 (12,756 kB): contact lecturer for slide set

Reading for students:

Module HC – Methods for measuring Human Intelligence

Keywords: performance, metrics, error, accuracy

Topic 01: Fundamentals to measure and evaluate human intelligence [1]

Topic 02: Low cost biometric technologies: 2D/3D cameras, eye-tracking, heart sensors

Topic 03: Advanced biometric technologies: EMG/ECG/EOG/PPG/GSR

Topic 04: Thinking-Aloud Technique

Topic 05: Microphone/Infrared sensor arrays

Topic 06: Affective computing: measuring emotion and stress [3]

Lecture slides 2×2 (17,111 kB): contact lecturer for slide set

Reading for students:

[1] José Hernández-Orallo 2017. The measure of all minds: evaluating natural and artificial intelligence, Cambridge University Press, doi:10.1017/9781316594179. Book Website: https://allminds.org

[2] Andrew T. Duchowski 2017. Eye tracking methodology: Theory and practice. Third Edition, Cham, Springer, doi:10.1007/978-3-319-57883-5.

[3] Christian Stickel, Martin Ebner, Silke Steinbach-Nordmann, Gig Searle & Andreas Holzinger 2009. Emotion Detection: Application of the Valence Arousal Space for Rapid Biological Usability Testing to Enhance Universal Access. In: Stephanidis, Constantine (ed.) Universal Access in Human-Computer Interaction. Addressing Diversity, Lecture Notes in Computer Science, LNCS 5614. Berlin, Heidelberg: Springer, pp. 615-624, doi:10.1007/978-3-642-02707-9_70.

Module TA – The Theory of Explanations

Keywords: Explanation

Topic 01: What is a good explanation?

Topic 02: Explaining Explanations

Topic 03: The limits of explainability [2]

Topic 04: How to measure the value of an explanation

Topic 05: Practical Examples from the medical domain

Lecture slides 2×2 (5,914 kB): contact lecturer for slide set

Reading for students:

[1] Zachary C. Lipton 2016. The mythos of model interpretability. arXiv:1606.03490.

[2] https://www.media.mit.edu/articles/the-limits-of-explainability/

Module ET – Ethical, Legal and Social Issues of Explainable AI

Keywords: Law, Ethics, Society, Governance, Compliance, Fairness, Accountability, Transparency

Topic 01: Definitions Automatic-Automated-Autonomous:

Human-out-of-loop, Human-still-in-control, Human-in-the-Loop, Computer-in-the-Loop

Topic 02: Legal accountability and Moral dilemmas

Topic 03: Ethical algorithms and the proof of explanations (truth vs. trust)

Topic 04: Responsible AI [2]

Topic 05: Explaining Explanations and the GDPR

Lecture slides 2×2 (7,689 kB): contact lecturer for slide deck

Reading for students:

[1] A very valuable resource can be found at the Future of Privacy Forum:

https://fpf.org/artificial-intelligence-and-machine-learning-ethics-governance-and-compliance-resources/

[2] Ronald Stamper 1988. Pathologies of AI: Responsible use of artificial intelligence in professional work. AI & society, 2, (1), 3-16, doi: 10.1007/BF01891439.

Zoo of methods of explainable AI (listed alphabetically without any preference)

- BETA = Black Box Explanation through Transparent Approximation, a post-hoc explainability method developed by Lakkaraju, Kamar, Caruana & Leskovec (2017); it learns two-level decision sets, where each rule explains the model behaviour. Himabindu Lakkaraju, Ece Kamar, Rich Caruana & Jure Leskovec 2017. Interpretable and Explorable Approximations of Black Box Models. arXiv:1707.01154.

- Deep Taylor Decomposition = Grégoire Montavon, Wojciech Samek & Klaus-Robert Müller 2017. Methods for interpreting and understanding deep neural networks. arXiv:1706.07979.

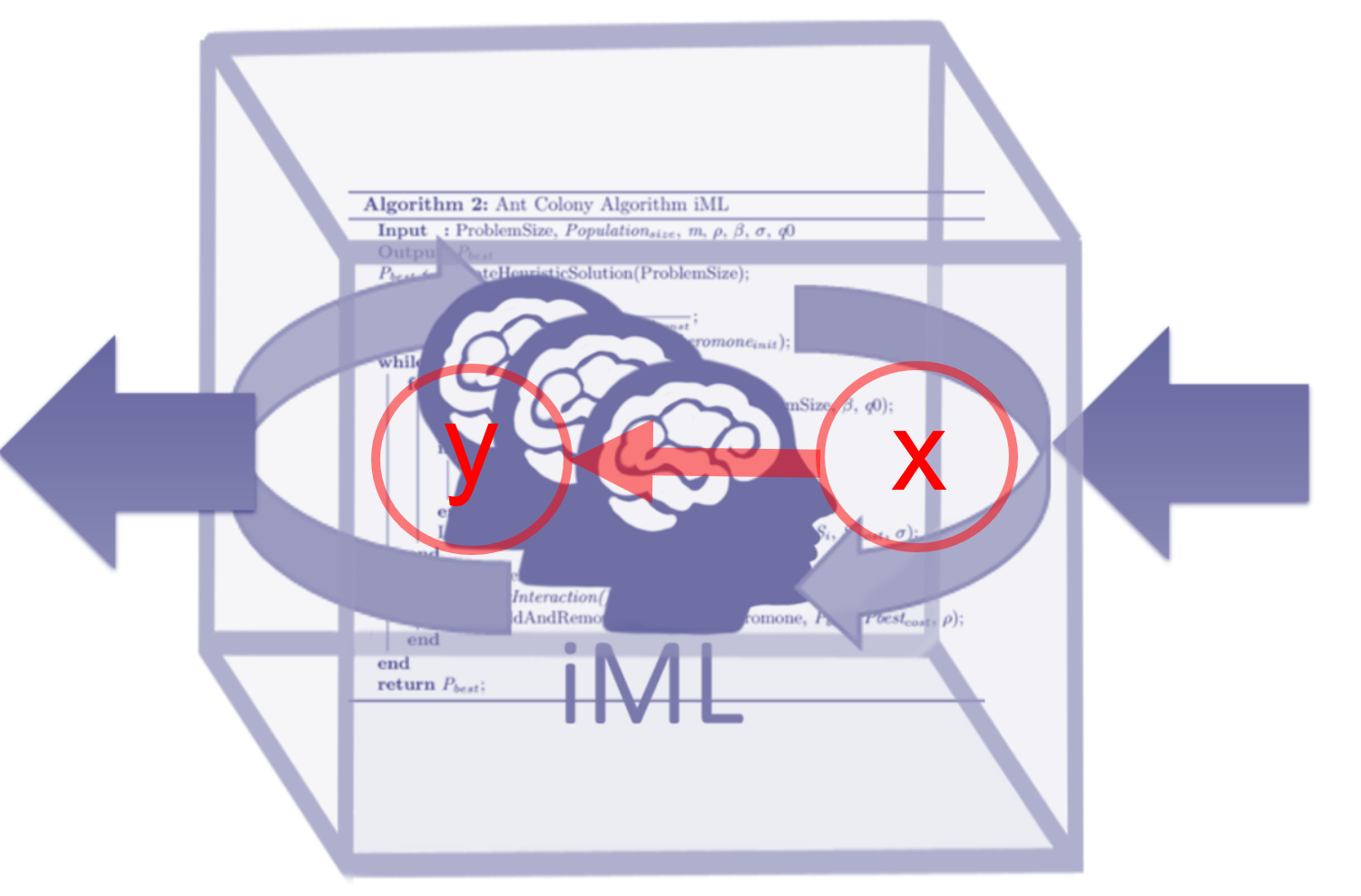

- iML = interactive Machine Learning with the Human-in-the-Loop is a versatile ante-hoc method for making use of the contextual understanding of a human expert complementing the machine learning algorithms; Andreas Holzinger et al. 2018. Interactive machine learning: experimental evidence for the human in the algorithmic loop. Springer/Nature Applied Intelligence, doi:10.1007/s10489-018-1361-5.

- LIME = Local Interpretable Model-Agnostic Explanations is a universally usable tool; Marco Tulio Ribeiro, Sameer Singh & Carlos Guestrin 2016. Model-Agnostic Interpretability of Machine Learning. arXiv:1606.05386.

- LRP = Layer-wise Relevance Propagation is a post-hoc method most suitable for deep neural networks; Sebastian Bach, Alexander Binder, Grégoire Montavon, Frederick Klauschen, Klaus-Robert Müller & Wojciech Samek 2015. On pixel-wise explanations for non-linear classifier decisions by layer-wise relevance propagation. PloS one, 10, (7), e0130140, doi:10.1371/journal.pone.0130140. NOTE: Sebastian BACH is now Sebastian LAPUSCHKIN

References from own work (references to related work will be given within the course):

[1] Andreas Holzinger, Chris Biemann, Constantinos S. Pattichis & Douglas B. Kell (2017). What do we need to build explainable AI systems for the medical domain? arXiv:1712.09923. https://arxiv.org/abs/1712.09923

[2] Andreas Holzinger, Bernd Malle, Peter Kieseberg, Peter M. Roth, Heimo Müller, Robert Reihs & Kurt Zatloukal (2017). Towards the Augmented Pathologist: Challenges of Explainable-AI in Digital Pathology. arXiv:1712.06657. https://arxiv.org/abs/1712.06657

[3] Andreas Holzinger, Markus Plass, Katharina Holzinger, Gloria Cerasela Crisan, Camelia-M. Pintea & Vasile Palade (2017). A glass-box interactive machine learning approach for solving NP-hard problems with the human-in-the-loop. arXiv:1708.01104.

[4] Andreas Holzinger (2016). Interactive Machine Learning for Health Informatics: When do we need the human-in-the-loop? Brain Informatics, 3, (2), 119-131, doi:10.1007/s40708-016-0042-6.

[5] Andreas Holzinger (2018). Explainable AI (ex-AI). Informatik-Spektrum, 41, (2), 138-143, doi:10.1007/s00287-018-1102-5.

https://link.springer.com/article/10.1007/s00287-018-1102-5

[6] Katharina Holzinger, Klaus Mak, Peter Kieseberg & Andreas Holzinger 2018. Can we trust Machine Learning Results? Artificial Intelligence in Safety-Critical decision Support. ERCIM News, 112, (1), 42-43.

https://ercim-news.ercim.eu/en112/r-i/can-we-trust-machine-learning-results-artificial-intelligence-in-safety-critical-decision-support

[7] Andreas Holzinger, et al. 2018. Interactive machine learning: experimental evidence for the human in the algorithmic loop. Applied Intelligence, doi:10.1007/s10489-018-1361-5.

Mini Student’s Glossary:

Ante-hoc Explainability (AHE) := such models are interpretable by design, e.g. glass-box approaches; typical examples include linear regression, decision trees/lists, random forests, Naive Bayes and fuzzy inference systems; or GAMs, Stochastic AOGs, and deep symbolic networks; they have a long tradition, can be designed from expert knowledge or from data, and are useful as a framework for the interaction between human knowledge and hidden knowledge in the data.

BETA := Black Box Explanation through Transparent Approximation, developed by Lakkaraju, Kamar, Caruana & Leskovec (2017); it learns two-level decision sets, where each rule explains the model behaviour.

Decomposition := process of resolving relationships into their constituent components (hopefully representing the relevant interest). Highly theoretical, because in the real world this is hard due to the complexity (e.g. noise) and untraceable imponderabilities in our observations.

Explainability := motivated by the opaqueness of so-called “black-box” approaches, it is the ability to provide an explanation of why a machine decision has been reached (e.g. why the deep network recognized a cat). Finding an appropriate explanation is difficult, because this requires understanding the context and providing a description of causality and consequences of a given fact. (German: Erklärbarkeit; see also: Verstehbarkeit, Nachvollziehbarkeit, Zurückverfolgbarkeit, Transparenz)

Explanation := set of statements that describe a given set of facts to clarify causality, context and consequences thereof; it is a core topic of knowledge discovery involving “why” questions (“Why is this a cat?”). (German: Erklärung, Begründung)

Explanatory power := the ability of a set of hypotheses to effectively explain the subject matter it pertains to (opposite: explanatory impotence).

European General Data Protection Regulation (EU GDPR) := Regulation EU 2016/679 (see EUR-Lex 32016R0679); it will make black-box approaches difficult to use, because they are often unable to explain why a decision has been made (see explainable AI).

Gaussian Process (GP) := collection of random variables indexed by time or space such that every finite subset of them has a multivariate Gaussian distribution; provides a probabilistic approach to learning in kernel machines (see: Carl Edward Rasmussen & Christopher K.I. Williams 2006. Gaussian processes for machine learning, Cambridge (MA), MIT Press); this can be used for explanations. (see also: Visual Exploration Gaussian)

Gradient := a vector providing the direction of maximum rate of change.
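Gradient-based explanation methods such as sensitivity analysis (Module 08) rest on the gradient of a model output with respect to its inputs. The following minimal sketch estimates such a gradient numerically with central differences; the toy `model` function and the helper name `numerical_gradient` are hypothetical and serve only as an illustration.

```python
def numerical_gradient(f, x, eps=1e-6):
    """Central-difference estimate of the gradient of f at the point x."""
    grad = []
    for i in range(len(x)):
        x_plus = list(x)
        x_minus = list(x)
        x_plus[i] += eps
        x_minus[i] -= eps
        grad.append((f(x_plus) - f(x_minus)) / (2 * eps))
    return grad


def model(x):
    # Hypothetical toy "model": depends strongly on x[0], weakly on x[1]
    return 3.0 * x[0] ** 2 + 0.5 * x[1]


g = numerical_gradient(model, [1.0, 2.0])
print(g)  # close to the analytic gradient [6*x[0], 0.5] evaluated at x[0] = 1
```

A large absolute gradient component indicates that a small change in that input changes the output strongly, which is the intuition behind saliency maps.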

Interactive Machine Learning (iML) := machine learning algorithms which can interact with (partly human) agents and can optimize their learning behaviour through this interaction. Holzinger, A. 2016. Interactive Machine Learning for Health Informatics: When do we need the human-in-the-loop? Brain Informatics (BRIN), 3, (2), 119-131.

Inverse Probability := an older term for the probability distribution of an unobserved variable; it was described by De Morgan in 1837, in reference to Laplace’s (1774) method of probability.
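In modern terms, inferring the distribution of an unobserved variable from an observation is done with Bayes' rule. The sketch below works through a diagnostic-test example; the prevalence, sensitivity and specificity values are invented for illustration and do not come from the course material.

```python
def posterior(prior, sensitivity, specificity):
    """P(disease | positive test) via Bayes' rule."""
    p_pos_given_disease = sensitivity
    p_pos_given_healthy = 1.0 - specificity
    # Total probability of observing a positive test
    evidence = p_pos_given_disease * prior + p_pos_given_healthy * (1.0 - prior)
    return p_pos_given_disease * prior / evidence


# Hypothetical numbers: 1 % prevalence, 90 % sensitivity, 95 % specificity
p = posterior(prior=0.01, sensitivity=0.90, specificity=0.95)
print(round(p, 3))
```

Even with a fairly accurate test, the posterior stays well below 50 % because the prior (prevalence) is low.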

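Kernel := function underlying a class of algorithms for pattern analysis (kernel methods), e.g. the support vector machine (SVM); very useful for explainable AI

Kernel trick := implicitly transforming data into a higher-dimensional feature space, in which the classes may have a clear dividing margin, by evaluating a kernel function instead of computing the transformation explicitly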
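The kernel trick can be checked numerically: a kernel evaluated on the original inputs equals the inner product of explicitly transformed inputs. The sketch below uses the homogeneous polynomial kernel of degree 2 on two-dimensional inputs; the function names and the sample points are illustrative assumptions.

```python
import math


def poly_kernel(x, z):
    """Homogeneous polynomial kernel of degree 2: k(x, z) = (x . z)^2."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2


def feature_map(x):
    """Explicit feature map phi with phi(x) . phi(z) = (x . z)^2 for 2-D inputs."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


# Hypothetical sample points
x, z = (1.0, 2.0), (3.0, 4.0)

k_implicit = poly_kernel(x, z)                    # computed in the 2-D input space
k_explicit = dot(feature_map(x), feature_map(z))  # computed in the 3-D feature space
print(k_implicit, k_explicit)
```

Both values agree up to floating-point rounding, although the first never constructs the three-dimensional feature vectors.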

Post-hoc Explainability (PHE) := such methods are designed for interpreting black-box models; they provide local explanations for a specific decision and re-enact it on request; typical examples include LIME, BETA, LRP, Local Gradient Explanation Vectors, prediction decomposition or simply feature selection.

Preference learning (PL) := concerns problems in learning to rank, i.e. learning a predictive preference model from observed preference information, e.g. with label ranking, instance ranking, or object ranking. Fürnkranz, J., Hüllermeier, E., Cheng, W. & Park, S.-H. 2012. Preference-based reinforcement learning: a formal framework and a policy iteration algorithm. Machine Learning, 89, (1-2), 123-156.

Multi-Agent Systems (MAS) := include collections of several independent agents, which could also be a mixture of computer agents and human agents. An excellent pointer to the latter is: Jennings, N. R., Moreau, L., Nicholson, D., Ramchurn, S. D., Roberts, S., Rodden, T. & Rogers, A. 2014. On human-agent collectives. Communications of the ACM, 80-88.

Transfer Learning (TL) := The ability of an algorithm to recognize and apply knowledge and skills learned in previous tasks to novel tasks or new domains, which share some commonality. Central question: Given a target task, how do we identify the commonality between the task and previous tasks, and transfer the knowledge from the previous tasks to the target one?

Pan, S. J. & Yang, Q. 2010. A Survey on Transfer Learning. IEEE Transactions on Knowledge and Data Engineering, 22, (10), 1345-1359, doi:10.1109/tkde.2009.191.

Pointers:

- Past Event: Deadline: July 2, 2018: 2nd International Workshop on Interactive Adaptive Learning (IAL2018), co-located with the European Conference on Machine Learning and Principles and Practice of Knowledge Discovery (ECML PKDD 2018), 10-14 September 2018, Dublin (Ireland)

https://www.ies.uni-kassel.de/p/ial2018/index.html

- Past Event: Deadline: June 11, 2018: Workshop on Interpretability of Machine Intelligence in Medical Image Computing at MICCAI 2018, September 16, 2018

https://imimic.bitbucket.io

- Past Event: Deadline: May 7, 2018: MAKE-Explainable AI (MAKE-exAI) workshop on explainable Artificial Intelligence at the CD-MAKE 2018 conference, Hamburg, August 27-30, 2018

https://cd-make.net/special-sessions/make-explainable-ai

- Past Event: NIPS 2017 Symposium “Interpretable Machine Learning” (December 7, 2017), organized by Andrew G. WILSON, Cornell University; Jason YOSINSKI, Uber AI Labs; Patrice SIMARD, Microsoft Research; Rich CARUANA, Cornell University; William HERLANDS, Carnegie Mellon University

https://interpretable.ml/

https://arxiv.org/html/1711.09889

Selection of important People relevant for ex-AI (incomplete, ordered by historic timeline)

BAYES, Thomas (1702-1761) gave a straightforward definition of probability [Wikipedia]

HUME, David (1711-1776) stated that causation is a matter of perception; he argued that inductive reasoning and belief in causality cannot be justified rationally; our trust in causality and induction results from custom and mental habit [Wikipedia]

PRICE, Richard (1723-1791) edited and commented on the work of Thomas Bayes in 1763 [Wikipedia]

LAPLACE, Pierre-Simon, Marquis de (1749-1827) developed the Bayesian interpretation of probability [Wikipedia]

GAUSS, Carl Friedrich (1777-1855) with contributions from Laplace derives the normal distribution (Gauss bell curve) [Wikipedia]

MARKOV, Andrey (1856-1922) worked on stochastic processes and on what is known now as Markov Chains. [Wikipedia]

TUKEY, John Wilder (1915-2000) suggested in 1962 together with Frederick Mosteller the name “data analysis” for computational statistical sciences, which became much later the name data science [Wikipedia]

PEARL, Judea (1936 – ) is the Turing Awardee for pioneering work in Bayesian networks and probabilistic approaches to AI [Wikipedia]

Antonyms (incomplete)

- ante-hoc <> post-hoc

- big data sets <> small data sets

- certain <> uncertain

- correlation <> causality

- comprehensible <> incomprehensible

- confident <> doubtful

- discriminative <> generative

- ethical <> unethical

- explainable <> obscure

- Frequentist <> Bayesian

- glass box <> black box

- Independent and identically distributed data (IID data) <> non-independent and identically distributed data (non-IID, real-world data)

- intelligible <> unintelligible

- legal <> illegal

- legitimate <> illegitimate

- low dimensional <> high dimensional

- underfitting <> overfitting

- objective <> subjective

- parametric <> non-parametric

- predictable <> unpredictable

- reliable <> unreliable

- repeatable <> unrepeatable

- replicable <> not replicable

- re-traceable <> untraceable

- supervised <> unsupervised

- sure <> unsure

- transparent <> opaque

- trustworthy <> untrustworthy

- truthful <> untruthful

- verifiable <> non-verifiable (undetectable)